This article was sourced from the internet: A HuggingFace engineer explains how to build the best positional encoding for a Transformer

"A complex system that works is invariably found to have evolved from a simple system that worked." (John Gall)

```python
import torch
import torch.nn as nn
from transformers import AutoTokenizer, AutoModel

model_id = "meta-llama/Llama-3.2-1B"
tok = AutoTokenizer.from_pretrained(model_id)
model = AutoModel.from_pretrained(model_id)

text = "The dog chased another dog"
tokens = tok(text, return_tensors="pt")["input_ids"]
embeddings = model.embed_tokens(tokens)  # token embeddings only, no positional information
hdim = embeddings.shape[-1]

# Randomly initialised query, key, and value projections
W_q = nn.Linear(hdim, hdim, bias=False)
W_k = nn.Linear(hdim, hdim, bias=False)
W_v = nn.Linear(hdim, hdim, bias=False)
mha = nn.MultiheadAttention(embed_dim=hdim, num_heads=4, batch_first=True)

with torch.no_grad():
    for param in mha.parameters():
        nn.init.normal_(param, std=0.1)  # Initialize weights to be non-negligible

output, _ = mha(W_q(embeddings), W_k(embeddings), W_v(embeddings))

# Index 0 is the BOS token, so the two "dog" tokens sit at indices 2 and 5
dog1_out = output[0, 2]
dog2_out = output[0, 5]
print(f"Dog output identical?: {torch.allclose(dog1_out, dog2_out, atol=1e-6)}")  # True
```

Let's start designing and iterating on encoding schemes.

Figure: IntegerEncoding
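As a rough illustration of what the IntegerEncoding figure depicts, the sketch below assumes the scheme simply adds each token's integer position to every component of its embedding. The function name `integer_encode` and the toy embedding values are hypothetical and chosen only for this example.

```python
def integer_encode(embeddings):
    """Add the integer position index to every component of each embedding."""
    return [[x + pos for x in emb] for pos, emb in enumerate(embeddings)]


# Two identical toy "dog" embeddings at positions 0 and 3 (hypothetical values).
dog = [0.1, -0.2, 0.3]
seq = [dog, [0.5, 0.5, 0.5], [0.0, 1.0, 0.0], dog]

encoded = integer_encode(seq)
print(encoded[0] != encoded[3])  # the two copies can now be told apart
print(encoded[3])                # the position value is far larger than the original components
```

Under this assumption the two identical tokens become distinguishable, but the added position grows without bound and quickly swamps the embedding values, which is one reason to keep iterating on the scheme.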

Figure: BinaryEncoding
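A minimal sketch of the idea behind the BinaryEncoding figure, assuming the position is written as a fixed-width bit vector instead of a single integer. The helper `binary_encode` and the width of 3 bits are hypothetical choices made for illustration.

```python
def binary_encode(pos, n_bits):
    """Return the n_bits-wide binary representation of pos, lowest bit first."""
    return [(pos >> i) & 1 for i in range(n_bits)]


for pos in range(4):
    print(pos, binary_encode(pos, 3))
```

Every component now stays within 0 and 1, and the lowest bit flips at every step while higher bits flip progressively less often.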

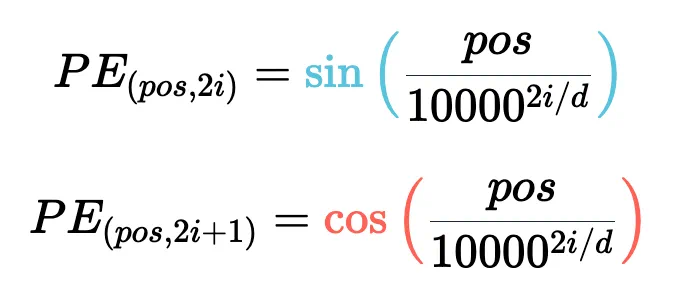

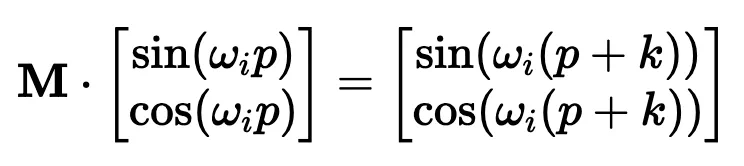

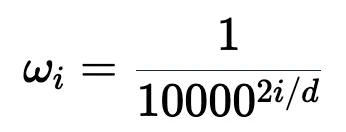

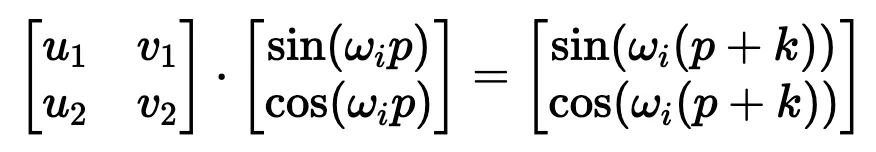

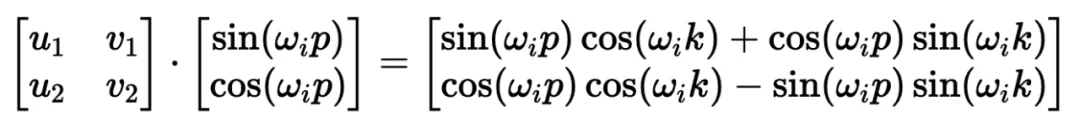

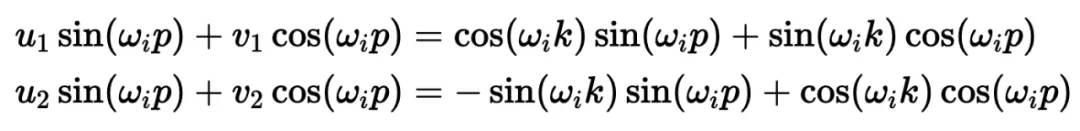

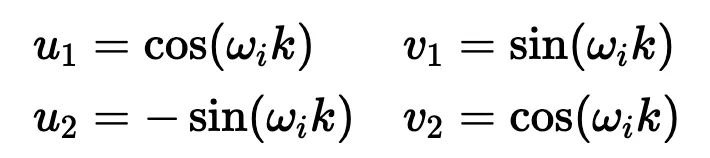

To understand how sine and cosine work together to produce this linear relationship, we need to dig into some trigonometry.
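The linear relationship can be checked numerically. The sketch below assumes a single frequency `omega` and encodes a position `p` as the pair `(sin(omega * p), cos(omega * p))`. By the angle-addition identities, shifting the position by `k` amounts to multiplying that pair by a 2x2 rotation matrix that depends only on `k`. The helper names and the sample values are hypothetical.

```python
import math


def sin_cos_pair(pos, omega):
    """Encode a position as a (sin, cos) pair at one frequency."""
    return (math.sin(omega * pos), math.cos(omega * pos))


def shift_matrix(k, omega):
    """2x2 matrix that maps the pair at position p to the pair at position p + k."""
    c, s = math.cos(omega * k), math.sin(omega * k)
    return ((c, s), (-s, c))


def apply(matrix, vec):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return tuple(row[0] * vec[0] + row[1] * vec[1] for row in matrix)


omega, p, k = 0.1, 7, 5
shifted = apply(shift_matrix(k, omega), sin_cos_pair(p, omega))
direct = sin_cos_pair(p + k, omega)

# The transformed pair matches the directly computed pair up to float error.
print(all(math.isclose(a, b, abs_tol=1e-12) for a, b in zip(shifted, direct)))
```

The matrix never references `p`, so the same transform moves any position forward by `k`. Neither sine nor cosine alone would allow this, since each identity needs both terms.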

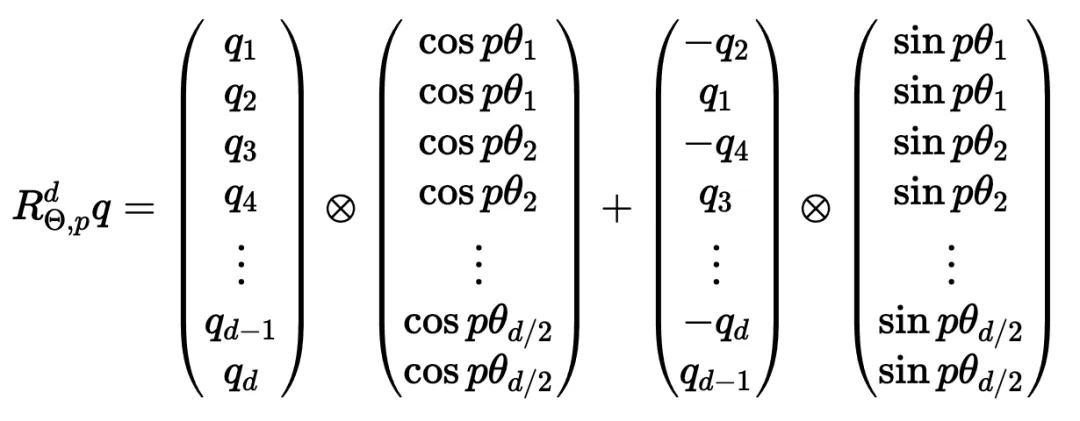

[…] coordinate pairs. This might seem intuitive; after all, we paired components almost arbitrarily earlier. However, this would be a mistake!